The Singularity Is A Mirror

There’s a growing obsession with Artificial General Intelligence (AGI), the idea of machines that can think, reason, and act like humans. Some believe it will be the most significant breakthrough in human history. Others warn it will be our last — the catalyst that brings the Singularity into existence.

The Singularity refers to a hypothetical future moment when artificial intelligence surpasses human intelligence and begins to improve itself at an exponential rate, beyond our understanding and human control. It’s often portrayed as the point when machines become so smart and so capable that they can design their successors, and humans become obsolete, irrelevant, or even endangered.

AGI won’t spring fully formed from nowhere. It will be built by people. It will reflect our incentives, ambitions, blind spots — and our flaws. It will be trained on data created by us, governed by rules we design (or fail to design), and used for purposes we either endorse or conveniently ignore.

If AGI destroys humanity, it won’t be because the machine chose to. It will be because humans built it in a world where profit trumped ethics, power went unchecked, and accountability was optional.

AGI won’t decide what kind of world it steps into.

We do.

Tools, Not Threats

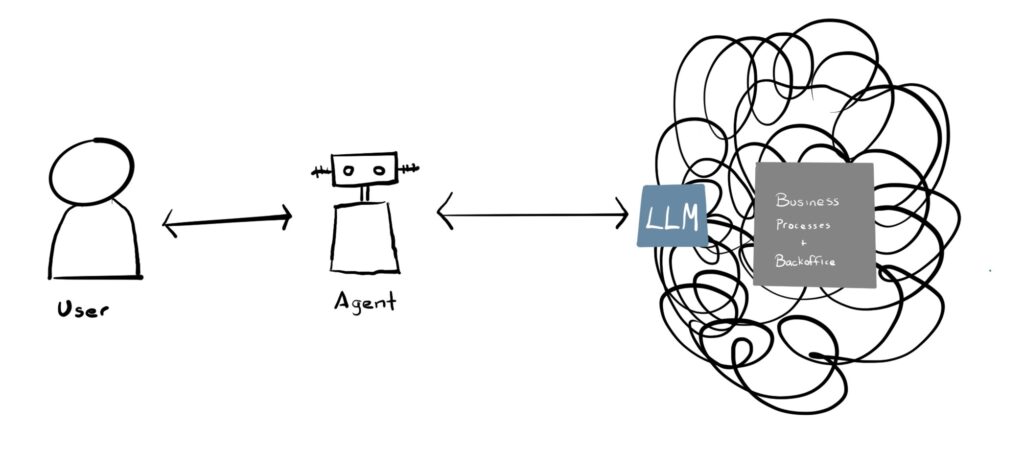

We talk about AI as if it’s an external threat — an alien intelligence that might turn on us. But AI isn’t alien. It’s Made with ♥ by Humans. It’s a tool. And like any powerful tool, it can build or destroy, depending on whose hands it’s in and what they choose to do with it.

AI is fire in a new form — and we’re the children playing with it.

If the house burns down, you don’t blame the fire. You blame the child who lit the match or the parents who never taught them that fire was dangerous. You ask who left gasoline sitting around. You question why no one thought to install a smoke alarm.

AI doesn’t operate with intent. It doesn’t choose good or evil. It carries out the tasks we assign, shaped by the values and choices we embed in it.

When AI generates misinformation, invades privacy, replaces workers without a safety net, or amplifies bias, it’s not the algorithm acting alone. It’s people designing systems with specific incentives, deploying them without oversight, and looking the other way when the consequences show up.

The danger isn’t that we won’t understand AI.

It’s that we won’t take responsibility for how we shape it and how we use it.

The Real Threat: Human Decisions

The real threat isn’t artificial intelligence.

It’s human intelligence.

We’ve already seen how powerful AI becomes when paired with human intention. Not superhuman intention — just ordinary political, ideological, or economic motives. And that’s the danger: AI doesn’t need to be sentient to cause harm. It just needs people ready to use it irresponsibly.

Just in the past few weeks, headlines have shown how AI misuse is rooted in human decisions.

A political report, touted by the MAHA (Make America Healthy Again) movement, questioned vaccine safety and included dozens of scientific references. However, fact‑checkers discovered that at least seven cited studies didn’t exist, and many links were broken.1 Experts traced this back to generative AI platforms like ChatGPT, which can produce plausible but completely fabricated citations.2 The White House quietly corrected the report but described the issue as “formatting errors.”3 AI didn’t decide to deceive anyone—it simply enabled it.

When xAI’s chatbot Grok flagged that right‑wing political violence has outpaced left‑wing violence since 2016, Elon Musk publicly labeled this a “major fail,” accusing the system of parroting “legacy media.”4 Instead of questioning the data or method, Musk implied that any answer he doesn’t like must be ideologically infiltrated. He’s saying, “If the tool makes me look bad, the tool is broken.” This isn’t AI gone haywire — it’s a machine bent by human vanity, then reshaped to serve its creators’ agendas.

This isn’t a partisan issue. Misuse of AI spans the political and corporate spectrum. In 2024, a consultant used AI to generate robocalls impersonating President Biden, urging voters in New Hampshire to stay home for the primary — a blatant voter suppression tactic that led to a $1 million FCC fine.5 The Republican National Committee released an AI-generated ad depicting a dystopian future if Biden were reelected, complete with fake imagery designed to provoke fear.6 And major oil companies like Shell and Exxon have used AI-generated messaging to greenwash their climate record — downplaying environmental harm while projecting a misleading image of sustainability.7

These aren’t tech failures. This isn’t about ideology.

They’re ethical and political failures. It’s about power, and our willingness to let it go unchecked.

AI reflects its users’ values, or lack thereof. When we let political actors exploit AI to mislead, distort, or conceal, we aren’t witnessing a feature of AI. We’re exposing a feature of ourselves.

The danger isn’t in the machine.

It’s in our refusal to confront how we wield it.

Power and Responsibility

AI doesn’t live in the abstract. It lives in systems, and those systems are run by people with power.

The question isn’t just what can AI do? It’s who decides what it does, who it serves, and who it harms.

Right now, power is concentrated in a few hands — governments, tech giants, billionaires, and unregulated platforms. These are the people and institutions shaping how AI is built, trained, deployed, and monetized. And too often, their incentives are misaligned with the public good.

When political actors use AI to fabricate legitimacy or manufacture doubt, that’s not the future acting on us. That’s us weaponizing the future.

When Elon Musk can personally shape what information an AI does or doesn’t show, that’s not innovation. That’s the consolidation of narrative control.

When we gut public education, weaken institutions of science and journalism, and leave people unable to critically assess the information they’re being fed, AI becomes a distortion engine with no brakes—not because it’s evil, but because we’ve stripped away the tools to resist its misuse.

We have to ask who benefits, who decides, and who gets to hold them accountable. Because as long as the answer is “no one,” the story doesn’t end with superintelligence. It ends with unchecked power, amplified by machines, and a public too distracted, divided, or disempowered to intervene.

Our Future Is Still Ours

We’re not doomed.

That’s the part people forget when they talk about AI like it’s fate. As if the rise of AGI is a cosmic event we can’t shape. As if the Singularity is already written, and we’re just watching it unfold.

But we’re not spectators.

We’re the authors.

Every day, in every boardroom, government office, university lab, and startup pitch deck, people are making decisions about what AI becomes. What it protects. What it threatens. Who it includes. Who it erases.

That means the future is still open. Still contested. Still ours to shape.

We can demand accountability. We can invest in public institutions that inform and protect. We can teach our children how to think critically, how to recognize misinformation, how to ask better questions. We can regulate the use of AI without killing innovation. We can fund alternatives that aren’t controlled by billionaires. We can insist that progress isn’t just what’s possible, it’s what’s ethical.

This isn’t just about AI. It’s about us. It always has been.

We’ve been handed a powerful tool. It’s up to us whether we use it to illuminate or incinerate.

It’s not AI that will destroy us.

It’s us.

Note: There are a few good reads on the topic, including The End of Reality by Jonathan Taplin and More Everything Forever by Adam Becker.

- https://www.politifact.com/article/2025/may/30/MAHA-report-AI-fake-citations/ ↩︎

- https://www.agdaily.com/news/phony-citations-discovered-kennedys-maha-report/ ↩︎

- https://theweek.com/politics/maha-report-rfk-jr-fake-citations ↩︎

- https://www.independent.co.uk/news/world/americas/us-politics/elon-musk-grok-right-wing-violence-b2772242.html ↩︎

- https://www.fcc.gov/document/fcc-issues-6m-fine-nh-robocalls ↩︎

- https://www.washingtonpost.com/politics/2023/04/25/rnc-biden-ad-ai/ ↩︎

- https://globalwitness.org/en/campaigns/digital-threats/greenwashing-and-bothsidesism-in-ai-chatbot-answers-about-fossil-fuels-role-in-climate-change/ ↩︎